Part V: Bringing Up Cluster With Kubespray

Now that everything is ready, we can use ansible to bring up the cluster with kubespray. The cluster.yml playbook checks that all the dependencies are present on the nodes and that versions are correct, and then installs kubernetes on the cluster, as defined by the hosts.yaml you’ve created. Move to the kubespray area, and run the cluster.yml playbook:

cd ~/workspace/picluster

poetry shell

cd ../kubespray

ansible-playbook -i ../picluster/inventory/mycluster/hosts.yaml -u ${USER} -b -v --private-key=~/.ssh/id_ed25519 cluster.yml

It takes a long time to run, but it has a lot to do! With the verbose flag, you can see each step performed and whether anything was changed. At the end, you’ll get a summary, just like on all the other playbooks that were invoked. Here is the end of the output for a run where I already had a cluster (so things were already set up) and just ran the cluster.yml playbook again.

PLAY RECAP *********************************************************************
cypher : ok=658 changed=69 unreachable=0 failed=0 skipped=1123 rescued=0 ignored=0
localhost : ok=3 changed=0 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0
lock : ok=563 changed=44 unreachable=0 failed=0 skipped=1005 rescued=0 ignored=0
mouse : ok=483 changed=50 unreachable=0 failed=0 skipped=717 rescued=0 ignored=0
niobi : ok=415 changed=37 unreachable=0 failed=0 skipped=684 rescued=0 ignored=0

Sunday 31 December 2023 10:10:12 -0500 (0:00:00.173) 0:21:31.035 *******
===============================================================================
container-engine/validate-container-engine : Populate service facts ----- 99.19s
kubernetes-apps/ansible : Kubernetes Apps | Start Resources ----- 46.85s
etcd : Reload etcd ----- 35.10s
etcd : Gen_certs | Write etcd member/admin and kube_control_plane client certs to other etcd nodes ----- 34.06s
kubespray-defaults : Gather ansible_default_ipv4 from all hosts ----- 27.37s
network_plugin/calico : Start Calico resources ----- 26.65s
download : Download_file | Download item ----- 25.50s
policy_controller/calico : Start of Calico kube controllers ----- 17.93s
network_plugin/calico : Check if calico ready ----- 17.34s
kubernetes-apps/ansible : Kubernetes Apps | Lay Down CoreDNS templates ----- 17.28s
etcd : Gen_certs | Gather etcd member/admin and kube_control_plane client certs from first etcd node ----- 16.50s
download : Download_file | Download item ----- 14.33s
download : Check_pull_required | Generate a list of information about the images on a node ----- 12.78s
container-engine/containerd : Containerd | restart containerd ----- 12.36s
download : Check_pull_required | Generate a list of information about the images on a node ----- 12.13s
etcd : Gen_certs | run cert generation script for etcd and kube control plane nodes ----- 11.75s
download : Check_pull_required | Generate a list of information about the images on a node ----- 11.47s
download : Check_pull_required | Generate a list of information about the images on a node ----- 11.46s
network_plugin/calico : Calico | Create calico manifests ----- 11.23s
download : Download_file | Download item ----- 10.81s
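With output this long, it is easy to scroll past a host that failed. Here is a minimal sketch, assuming you saved the run's output to a file (cluster.log is a name I made up for illustration), that lists any host whose recap line shows a non-zero failed or unreachable count. The recap_problems function is a hypothetical helper, not something kubespray or ansible provides:

```shell
#!/usr/bin/env bash
# recap_problems: read ansible-playbook output on stdin and print the name of
# every host whose PLAY RECAP line has failed or unreachable above zero.
# (Hypothetical helper, not part of kubespray or ansible.)
recap_problems() {
  awk '
    /^PLAY RECAP/ { in_recap = 1; next }   # per-host lines follow this banner
    in_recap && /ok=/ {
      bad = 0
      for (i = 1; i <= NF; i++) {
        split($i, kv, "=")                 # e.g. "failed=0" -> kv[1], kv[2]
        if ((kv[1] == "failed" || kv[1] == "unreachable") && kv[2] + 0 > 0) bad = 1
      }
      if (bad) print $1                    # first field is the host name
    }
  '
}

# Example usage (cluster.log is an assumed file name):
#   ansible-playbook ... cluster.yml | tee cluster.log
#   recap_problems < cluster.log
```

No output means every host in the recap reported failed=0 and unreachable=0.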

If things are broken, you’ll need to go back, fix them, and try again. Once it is working, we can get the kube configuration file, so that we can run kubectl commands (we installed kubectl on the Mac in Part IV). I use a script (at ~/workspace/picluster) to make this easy to do:

../setup-kubectl.bash

The contents of the script are:

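# Name and IP address of one of the control plane nodes.
CONTROL_PLANE_NODE=cypher

CONTROL_PLANE_NODE_IP=10.11.12.198

# On the node: copy the admin kubeconfig into the login user's home.
# (${USER} expands locally, so this assumes the same user name on the node.)
ssh ${CONTROL_PLANE_NODE} mkdir -p /home/${USER}/.kube

ssh ${CONTROL_PLANE_NODE} sudo cp /etc/kubernetes/admin.conf /home/${USER}/.kube/config

ssh ${CONTROL_PLANE_NODE} sudo chown ${USER} /home/${USER}/.kube/config

# Locally: fetch the file and point it at the node instead of 127.0.0.1.
mkdir -p ~/.kube

scp ${CONTROL_PLANE_NODE}:.kube/config ~/.kube/config

# Note: "-i .bak" (with a space) is the macOS/BSD sed form.
sed -i .bak -e "s/127\.0\.0\.1/${CONTROL_PLANE_NODE_IP}/" ~/.kube/config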

You’ll need to set CONTROL_PLANE_NODE to the name of one of your control plane nodes, and CONTROL_PLANE_NODE_IP to that node’s IP address. Once the script has run, the config file will be set up to allow the kubectl command to access the cluster.
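One portability note: the "sed -i .bak" form in the script is the BSD/macOS spelling, while GNU sed on Linux expects "-i.bak" with no space. If you run this from a Linux workstation, a minimal sketch of the same substitution that avoids -i altogether could look like this (point_kubeconfig_at is a hypothetical name of mine):

```shell
#!/usr/bin/env bash
# point_kubeconfig_at FILE IP: replace 127.0.0.1 in FILE with IP, keeping the
# original as FILE.bak. Avoids "sed -i", so it behaves the same with BSD and
# GNU sed. (Hypothetical helper.)
point_kubeconfig_at() {
  local file="$1" ip="$2"
  cp "$file" "$file.bak" || return 1
  sed -e "s/127\.0\.0\.1/${ip}/" "$file.bak" > "$file"
}

# Example usage, in place of the sed line in the script:
#   point_kubeconfig_at ~/.kube/config "${CONTROL_PLANE_NODE_IP}"
#   kubectl get nodes    # should now list the cluster's nodes
```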

Next up in the series will be adding shared storage, a load balancer, ingress, monitoring, etc. Below are some other operations that can be done for the cluster.

Upgrading Cluster

This is a two-step process, and the details depend on what version of kubernetes you want to get to and what release of kubespray you are running. Each release of kubespray has a tag and corresponds to a kubernetes version. You can see the tags with:

git tag | sort -V --reverse

v2.23.1
v2.23.0
v2.22.1
v2.22.0
v2.21.0
...

Alternately, you can just use a specific commit or the latest on the master branch. Once you decide which tag/commit you want, you can do a checkout for that version:

git checkout v2.23.1

git checkout aea150e5d

For whichever tag/commit you use, you can find the default versions of kubernetes and of the calico plugin (what I chose for networking) by running grep commands from the repo area (you can look at specific files, but sometimes these are stored in different places):

grep -R "kube_version: " .

grep -R "calico_version: " .

Please note that, with kubespray, you have to upgrade one release at a time and cannot skip releases. So, if you want to go from tag v2.21.0 to v2.23.1, you would need to update to v2.22.0 or v2.22.1 first, and then to v2.23.1. If you are using a commit, find the last tag that precedes that commit, upgrade tag by tag until you reach it, and then you’ll be all set.
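To make the "no skipping" rule concrete, here is a minimal sketch that turns a list of tags into the sequence of stops between two releases. It assumes tags of the form vMAJOR.MINOR.PATCH under the same major version, and it picks the highest patch of each intermediate release (my choice, the rule above allows either). The upgrade_path name is hypothetical:

```shell
#!/usr/bin/env bash
# upgrade_path FROM TO: read kubespray tags on stdin and print the tags to
# step through, one release (vX.Y.*) at a time, ending at TO.
# Assumes vMAJOR.MINOR.PATCH tags with the same MAJOR. (Hypothetical helper.)
upgrade_path() {
  local from="$1" to="$2"
  sort -V | awk -v from="$from" -v to="$to" '
    function minor(tag,   p) { split(tag, p, "."); return p[2] + 0 }
    /^v[0-9]+\.[0-9]+\.[0-9]+$/ {
      m = minor($0)
      # Input is in ascending order, so the last tag seen for a given
      # intermediate release is its highest patch.
      if (m > minor(from) && m < minor(to)) last[m] = $0
    }
    END {
      for (m = minor(from) + 1; m < minor(to); m++)
        if (m in last) print last[m]
      print to
    }
  '
}

# Example usage, from the kubespray repo:
#   git tag | upgrade_path v2.21.0 v2.23.1
```

With the tags listed earlier, going from v2.21.0 to v2.23.1 prints v2.22.1 and then v2.23.1.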

Initially, I ended up using a non-tag version of kubespray because I wanted kubernetes 1.27, and the nearest release tag at the time was v2.22.1, which used kubernetes 1.26.5. I ended up using a commit on master that gave me 1.27.3.

As of this writing, the newest tag is v2.23.1, which was released 9 weeks ago and uses kubernetes 1.27.7. I just grabbed the latest on master, which supports kubernetes 1.28.5 (you can see that in the commit message, or with the following command):

git show HEAD:inventory/sample/group_vars/k8s_cluster/k8s-cluster.yml | grep kube_version

kube_version: v1.28.5

Granted, you may want to stick to tagged releases (it’s safer), or venture into newer versions, with newer kubernetes. Either way, you still need to update one release at a time with kubespray.

To update kubespray, from ~/workspace/kubernetes/kubespray/ I did the following:

- Saved my old inventory: mv ~/workspace/kubernetes/picluster/inventory/mycluster{,.save}

- Did a “git pull origin master” for the kubespray repo and checked out the version I wanted (either a tag, latest, etc).

- Copied the sample inventory: cp -r inventory/sample ../picluster/inventory/mycluster

- Updated files in ../picluster/inventory/mycluster/* from the ones in mycluster.save to get the customizations made. This includes hosts.yaml, group_vars/k8s_cluster/k8s-cluster.yml, group_vars/k8s_cluster/addons.yml, other_servers.yaml, and any other files you customized.

- I set kube_version in group_vars/k8s_cluster/k8s-cluster.yml to the version desired, as this was a customized item that still held the older version.
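When carrying customizations over from mycluster.save, a recursive diff of the two inventory directories shows what differs between the old copy and the fresh sample. A minimal sketch (inventory_diff is a hypothetical wrapper of mine around plain diff):

```shell
#!/usr/bin/env bash
# inventory_diff OLD NEW: show a unified, recursive diff of two inventory
# directories. diff exits 1 when it finds differences, which is the expected
# case here, so only a real error (exit code above 1) counts as failure.
# (Hypothetical helper.)
inventory_diff() {
  local old="$1" new="$2"
  diff -ru "$old" "$new" || [ $? -eq 1 ]
}

# Example usage, from the kubespray repo:
#   inventory_diff ../picluster/inventory/mycluster.save ../picluster/inventory/mycluster
```

Lines starting with "-" come from the saved inventory and lines starting with "+" from the new one, so your old overrides show up as "-" lines.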

In my case, the default calico version would be v3.26.4 (previously I had it overridden to v3.25.2), and the default kubernetes version would be v1.28.5 (previously I had v1.27.3).

Use the following command to upgrade the cluster with the new kubespray code and kubernetes version:

ansible-playbook -i ../picluster/inventory/mycluster/hosts.yaml -u ${USER} -b -v --private-key=~/.ssh/id_ed25519 upgrade-cluster.yml

When I did this, I ended up with Kubernetes 1.28.2, instead of the default 1.28.5 (not sure why). I ran the upgrade again, only this time I specified “kube_version: v1.28.5” in the ../picluster/inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml as an override, but it still was using v1.28.2.
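A quick way to spot this kind of mismatch is to compare the VERSION column of "kubectl get nodes" against what you asked for. Here is a minimal sketch that reads that command's default table output on stdin (it assumes VERSION is the last column, which holds for the default output but not for "-o wide"). The nodes_not_at name is hypothetical:

```shell
#!/usr/bin/env bash
# nodes_not_at VERSION: read the default "kubectl get nodes" table on stdin
# and print each node whose VERSION column differs from the one given.
# Assumes VERSION is the last column (true without -o wide).
# (Hypothetical helper.)
nodes_not_at() {
  local want="$1"
  awk -v want="$want" 'NR > 1 && $NF != want { print $1, $NF }'
}

# Example usage:
#   kubectl get nodes | nodes_not_at v1.28.5
```

No output means every node reports the requested version.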

Ref: https://kubespray.io/#/docs/upgrades

Adding a node

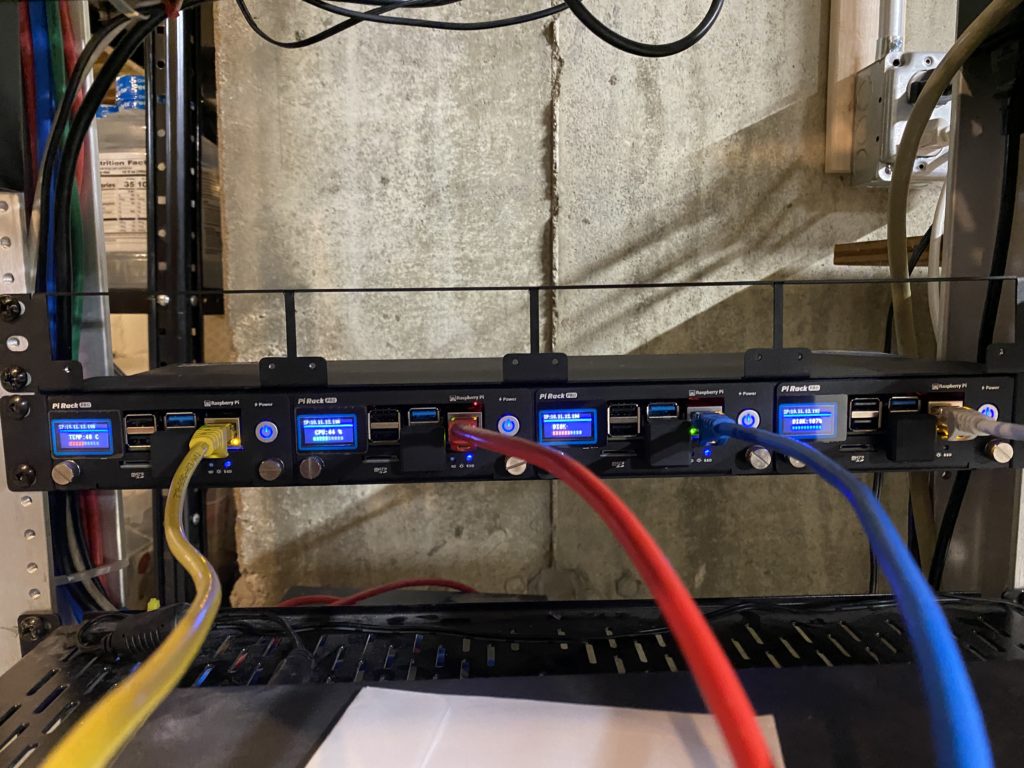

I received another Raspberry Pi 4 for Christmas and wanted to add it to the cluster. I followed all the steps in Part II to install Ubuntu on the Pi, Part III to repartition the SSD drive, and Part IV to add the new host to hosts.yaml, and then ran the ansible commands just for the node I was adding, to set up the rest of the items needed.

To add a control plane node, update the inventory (adding the node definition, and adding the node name to the control plane list and list of nodes) and run the kubespray cluster.yml playbook:

ansible-playbook -i ../picluster/inventory/mycluster/hosts.yaml -u ${USER} -b -v --private-key=~/.ssh/id_ed25519 cluster.yml

Then, restart the nginx-proxy pod, which is the local proxy for the api server. Since I’m using containerd, run this on each worker node:

crictl ps | grep nginx-proxy | awk '{print $1}' | xargs crictl stop

To add a worker node, update the inventory (adding the node definition, and adding the node name to the node list) and run the kubespray scale.yml playbook:

ansible-playbook -i ../picluster/inventory/mycluster/hosts.yaml -u ${USER} -b -v --private-key=~/.ssh/id_ed25519 --limit=${TARGET_NODE} scale.yml

Set TARGET_NODE to the name of the node being added. The --limit argument restricts the playbook to that node, so the other nodes are not disturbed.
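Since an empty or mistyped TARGET_NODE changes what --limit matches, a small guard before the run can help. This is a minimal sketch that only checks that the name appears as an indented "name:" key in hosts.yaml, which assumes the YAML layout of the kubespray sample inventory. The node_in_inventory name is hypothetical:

```shell
#!/usr/bin/env bash
# node_in_inventory FILE NODE: succeed only if NODE is non-empty and appears
# as an indented "NODE:" key in the inventory FILE. This is a plain text
# check, not a YAML parse. (Hypothetical helper.)
node_in_inventory() {
  local inventory="$1" node="$2"
  if [ -z "$node" ]; then
    echo "error: no node name given" >&2
    return 2
  fi
  if ! grep -Eq "^[[:space:]]+${node}:" "$inventory"; then
    echo "error: ${node} not found in ${inventory}" >&2
    return 1
  fi
}

# Example usage:
#   node_in_inventory ../picluster/inventory/mycluster/hosts.yaml "${TARGET_NODE}" \
#     && ansible-playbook -i ../picluster/inventory/mycluster/hosts.yaml -u ${USER} -b -v \
#          --private-key=~/.ssh/id_ed25519 --limit=${TARGET_NODE} scale.yml
```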

Ref: https://kubespray.io/#/docs/nodes

Tear Down Cluster

To tear down the cluster, you can use the reset.yml playbook provided:

ansible-playbook -i ../picluster/inventory/mycluster/hosts.yaml -u ${USER} -b -v --private-key=~/.ssh/id_ed25519 reset.yml

Side Bar

Old Tools…

On one attempt, after having updated the kubespray repo to the latest version, the cluster.yml playbook failed because the ansible version I was using was too old:

TASK [Check 2.15.5 <= Ansible version < 2.17.0] *******************************************************************************************************************************************

fatal: [localhost]: FAILED! => {

"assertion": "ansible_version.string is version(minimal_ansible_version, \">=\")",

"changed": false,

"evaluated_to": false,

"msg": "Ansible must be between 2.15.5 and 2.17.0 exclusive - you have 2.14.13"

}

Doing a “poetry show”, I could see what I had for ansible and one of the dependencies, ansible-core:

ansible 7.6.0 Radically simple IT automation

ansible-core 2.14.13 Radically simple IT automation
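The same range check can be done up front, before starting a long playbook run. Here is a minimal sketch using "sort -V" for the version comparison. The bounds are the ones from the error message above, and the version_in_range name is hypothetical:

```shell
#!/usr/bin/env bash
# version_in_range VERSION MIN MAX: succeed when MIN <= VERSION < MAX,
# comparing in version order with sort -V. (Hypothetical helper.)
version_in_range() {
  local v="$1" min="$2" max="$3"
  # VERSION >= MIN when MIN sorts first (or the two are equal).
  [ "$(printf '%s\n%s\n' "$min" "$v" | sort -V | head -n 1)" = "$min" ] || return 1
  # VERSION < MAX when they differ and VERSION sorts first.
  [ "$v" != "$max" ] && [ "$(printf '%s\n%s\n' "$v" "$max" | sort -V | head -n 1)" = "$v" ]
}

# Example usage, taking the ansible-core version from "poetry show":
#   core="$(poetry show | awk '$1 == "ansible-core" { print $2 }')"
#   version_in_range "$core" 2.15.5 2.17.0 || echo "ansible-core $core is out of range"
```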

To update, I used the command “poetry add ansible@latest”, which installs the latest version and updates all the dependencies:

Using version ^9.1.0 for ansible

Updating dependencies
Resolving dependencies... (0.3s)

Package operations: 0 installs, 2 updates, 0 removals

• Updating ansible-core (2.14.13 -> 2.16.2)
• Updating ansible (7.6.0 -> 9.1.0)

Writing lock file

If desired, you can do a “poetry search ansible” or “poetry search ansible-core” to see what the latest version is, and you can always specify exactly which version you want to install. That’s the beauty of poetry: you can pin specific versions of a package, so that things are repeatable.

Mismatched Calico/Kubernetes Versions

I had a case where my cluster was at kubernetes v1.27.3 and v3.25.2 Calico. The kubespray repo had a tag of v2.23.1, which called out v1.27.7 kubernetes and v3.25.2 Calico. Things were great.

I tried to update kubespray to the latest on the master branch, which defaults to kubernetes v1.28.5 and Calico v3.26.4. However, I still had Calico v3.25.2 in my customizations (with kubernetes updated to call out v1.28.5). The cluster.yml playbook ran without issues, but the calico-node pods did not come up and were in a crash loop. The install-cni container for a calico-node pod was showing an error saying:

Unable to create token for CNI kubeconfig error=serviceaccounts "calico-node" is forbidden: User "system:serviceaccount:kube-system:calico-node" cannot create resource "serviceaccounts/token" in API group "" in the namespace "kube-system"

Even though kubernetes v1.28.5 is supported by Calico v3.25.2, there was some incompatibility. I haven’t figured it out, but I saw this before as well, and the solution was to either use the versions called out in the commit being used for kubespray, or at least near that version for kubernetes. By using the default v3.26.4 Calico, it came up fine.
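To catch a leftover override like this before running the playbook, you can list the values a variable is pinned to in your inventory and compare them with what the kubespray checkout defines. A minimal sketch, assuming the variable is set as an uncommented top-level "name: value" line; the pinned_values name is hypothetical, and roles/ as the place where the defaults live is an assumption, since these files move around between releases:

```shell
#!/usr/bin/env bash
# pinned_values VAR DIR: print the distinct values assigned to VAR by
# uncommented "VAR: value" lines anywhere under DIR, with quotes stripped.
# (Hypothetical helper.)
pinned_values() {
  local var="$1" dir="$2"
  grep -Rh "^${var}:" "$dir" 2>/dev/null | awk '{ print $2 }' | tr -d "\"'" | sort -u
}

# Example usage, from the kubespray repo:
#   pinned_values calico_version ../picluster/inventory/mycluster   # my override, if any
#   pinned_values calico_version roles                              # checkout's default (assumed location)
```

If the first command prints a version that differs from the second, the inventory is still overriding the default.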

Also note that even though I specified kubernetes v1.28.5 in my customization (which happened to be the same as the default), I ended up with v1.28.2 (not sure why).